Twaweza means “we can make it happen” in Swahili. Twaweza works on enabling citizens to exercise agency and governments to be more open and responsive in Tanzania, Kenya and Uganda.

Putting citizens at the centre

SAUTI ZA WANANCHI

Sauti za Wananchi gives policy-makers, the media and the public unique access to data that provide insight into citizens' real-time experiences and views.

ANNUAL REPORT 2023

Read inspiring stories about how citizens in Uganda and Tanzania are building trust with their leaders, and learn about a unique opportunity in Kenya where we influenced the national agenda.

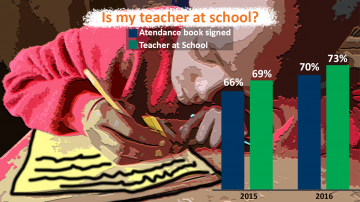

KIUFUNZA

Motivating teachers by measuring and rewarding individual performance: cash for results.